Thanks to everyone who stopped by to say hi at the Search Engine Strategies conference in San Jose last week!

I had a great time meeting people and talking about our new webmaster tools. I heard a lot of feedback about what webmasters liked, didn't like, and wanted to see in our Webmaster Central site. If you couldn't make it or didn't find me at the conference, please feel free to post your comments and suggestions in our discussion group. I want to hear about what you don't understand or what you want changed so I can make our webmaster tools as useful as possible.

Some of the highlights from the week:

This year, Danny Sullivan invited some of us from the team to "chat and chew" during a lunch-hour panel discussion. Anyone interested in hearing about Google's webmaster tools was welcome to come, and many did. Thanks for joining us! I loved showing off our product, answering questions, and getting feedback about what to work on next. Many people had already tried Sitemaps but hadn't seen the new features like Preferred domain and full crawling errors.

One of the questions I heard more than once at the lunch was about how big a Sitemap can be, and how to use Sitemaps with very large websites. Since Google can handle all of your URLs, the goal of Sitemaps is to tell us about all of them. A single Sitemap file can contain up to 50,000 URLs and should be no larger than 10 MB when uncompressed. If you have more URLs than that, simply break them up into several smaller Sitemaps and tell us about them all. You can create a Sitemap Index file, which is just a list of all your Sitemaps, to make managing several Sitemaps a little easier.
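For anyone who wants to script the splitting, here is a minimal Python sketch of the idea. It is only an illustration, not an official tool: the function names, the `sitemap-N.xml` file naming, and the `example.com` base URL are all made up for this example, and the XML namespace shown is the sitemaps.org 0.9 one, which is an assumption you should check against the schema version you actually target. It enforces only the 50,000-URL limit, so you would still need to check the 10 MB size limit separately.

```python
from xml.etree import ElementTree as ET

# Assumption: the sitemaps.org 0.9 namespace; adjust to the schema you use.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file URL limit mentioned above


def build_sitemap(urls):
    """Return the XML text of one Sitemap listing the given URLs."""
    root = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = url
    return ET.tostring(root, encoding="unicode")


def build_index(sitemap_urls):
    """Return the XML text of a Sitemap Index listing the given Sitemaps."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        ET.SubElement(ET.SubElement(root, "sitemap"), "loc").text = url
    return ET.tostring(root, encoding="unicode")


def split_into_sitemaps(urls, base_url, size=MAX_URLS):
    """Split urls into Sitemaps of at most `size` entries, plus one index.

    Returns (files, index): files maps a hypothetical file name to its XML,
    and index is the XML of the Sitemap Index pointing at those files.
    """
    files = {}
    for start in range(0, len(urls), size):
        name = f"sitemap-{len(files) + 1}.xml"
        files[name] = build_sitemap(urls[start:start + size])
    index = build_index([f"{base_url}/{name}" for name in files])
    return files, index
```

Calling `split_into_sitemaps(all_urls, "http://www.example.com")` gives you the individual Sitemap files to upload plus the index that lists them.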

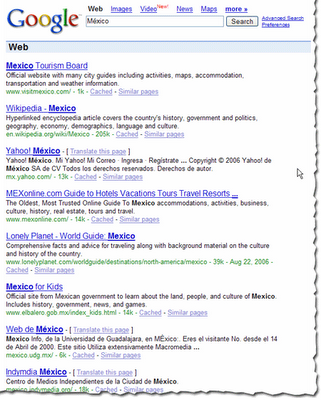

While hanging out at the Google booth I got another interesting question: one site owner told me that his site was listed in Google, but its description in the search results wasn't exactly what he wanted. (We were using the description of his site listed in the Open Directory Project.) He asked how to remove this description from Google's search results.

Vanessa Fox knew the answer! To specifically prevent Google from using the Open Directory for a page's title and description, use the following meta tag:

<meta name="GOOGLEBOT" content="NOODP">
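If you manage a lot of pages, you might want to confirm that the tag actually made it into your templates. The Python sketch below is one rough way to do that using only the standard library. The class and function names are hypothetical, and it looks only for the `GOOGLEBOT` meta tag shown above, matching the name and value case-insensitively.

```python
from html.parser import HTMLParser


class GooglebotMetaParser(HTMLParser):
    """Collects directives from <meta name="googlebot" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases attribute names; values keep their case.
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() != "googlebot":
            return
        for item in attr.get("content", "").split(","):
            if item.strip():
                self.directives.add(item.strip().lower())


def has_noodp(html):
    """Return True if the page's HTML carries the GOOGLEBOT NOODP meta tag."""
    parser = GooglebotMetaParser()
    parser.feed(html)
    return "noodp" in parser.directives
```

You would pass `has_noodp` the HTML of a page you have already fetched or read from disk.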

My favorite panel of the week was definitely Pimp My Site. The whole group was dressed to match the theme as they gave some great advice to webmasters.

Dax Herrera, the coolest "pimp" up there (and a fantastic piano player), mentioned that a lot of sites don't explain their product clearly on each page. For instance, when pimping Flutter Fetti, there were many places where all the site had to do was add the word "confetti" to the product description to make it clear, both to search engines and to users reaching the page, exactly what a Flutter Fetti stick is.

Another site that got pimped was a Yahoo! Stores web site. Someone from the audience asked if the webmaster could set up a Google Sitemap for their store. As Rob Snell pointed out, it's very simple: Yahoo! Stores will create a Google Sitemap for your website automatically, and even verify your ownership of the site in our webmaster tools.

Finally, if you didn't attend the Google Dance, you missed out! There were Googlers dancing, eating, and having a great time with all the conference attendees. Vanessa Fox represented my team at the Meet the Google Engineers hour that we held during the dance, and I heard Matt Cutts even starred in a music video!

While I was demoing Webmaster Central over in the labs area, someone asked me about the ability to share site information across multiple accounts. We associate your site verification with your Google Account, and allow multiple accounts to verify ownership of a site independently. Each account has its own verification file or meta tag, so to revoke a user's verification you can remove that user's file or meta tag at any time and re-verify your site. This means that your marketing person, your techie, and your SEO consultant can each verify the same site with their own Google Account. And if you start managing a site that someone else used to manage, all you have to do is add that site to your account and verify site ownership. You don't need to transfer the account information from the person who previously managed it.

Thanks to everyone who visited and gave us feedback. It was great to meet you!